How Neural Magic Turns Standard CPUs into AI Engines: CEO Brian Stevens

Amid the booming adoption of generative artificial intelligence, one company is taking a less conventional route to make these advances available to enterprises of all kinds. Massachusetts-based Neural Magic, a software-focused AI company, is leading an effort to end the reliance on costly, specialized hardware for deep learning workloads.

At the center of this effort is Neural Magic CEO Brian Stevens, a technology veteran of more than thirty years whose roles include senior positions at Google Cloud and Red Hat. Stevens is leading a push that could fundamentally change how enterprises bring AI into their operations and how they scale it.

Leadership and Vision: A New Path for AI Accessibility

Stevens brings the experience this role demands. Over his long career he has led complex engineering teams and helped pioneer cloud technologies. His focus now is on removing infrastructure bottlenecks so that teams can integrate generative AI into their applications without prohibitive cost or technical complexity.

Stevens believes AI innovation should not be limited to large tech companies or the few organizations that can afford it. Instead, Neural Magic aims to widen access so that businesses can run advanced AI tasks on inexpensive CPUs, the standard processors already present in most computing environments.

Rethinking AI Infrastructure: Beyond GPUs

Historically, high-performance AI workloads have relied mainly on graphics processing units (GPUs) or specialized accelerators such as tensor processing units (TPUs). Although this hardware excels at parallel computation, it comes with drawbacks: high purchase costs, heavy power consumption, and added complexity in deployment and maintenance.

Stevens argues that these limitations put generative AI out of reach for smaller companies, or for departments that cannot afford large GPU clusters. Neural Magic’s software offers a different picture: AI inference, the process of running a trained model to produce predictions, can run on hardware that is already in place, specifically CPU infrastructure, at speeds the company says are comparable to those of GPUs.

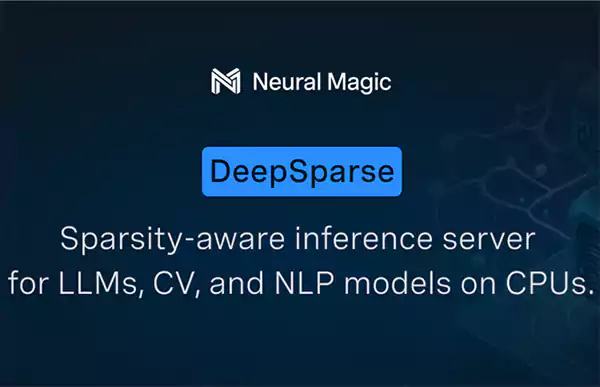

Software-Delivered AI: The DeepSparse Approach

Neural Magic’s biggest asset is DeepSparse, an inference runtime that delivers high-performance deep learning on ordinary CPUs. Rather than depending on external accelerators, the approach uses model pruning and compression techniques to reduce neural networks to their essential parts.
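To make the pruning idea concrete, here is a minimal sketch of unstructured magnitude pruning, one common pruning technique, in plain Python. It is a generic illustration, not Neural Magic’s own method, and the function names `magnitude_prune` and `sparsity_of` are hypothetical. The sketch assumes that the smallest-magnitude weights contribute least to a model’s output and simply sets a chosen fraction of them to zero.

```python
# Generic illustration of unstructured magnitude pruning.
# NOTE: hypothetical helper names; this is not Neural Magic's algorithm.

def magnitude_prune(weights, sparsity):
    """Return a copy of a 2-D weight matrix with the smallest-magnitude
    fraction `sparsity` of its entries set to zero."""
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    # Rank every weight by absolute value; ties are broken by position.
    ranked = sorted(
        (abs(w), r, c)
        for r, row in enumerate(weights)
        for c, w in enumerate(row)
    )
    k = int(len(ranked) * sparsity)  # number of weights to remove
    pruned = [row[:] for row in weights]
    for _, r, c in ranked[:k]:
        pruned[r][c] = 0.0
    return pruned


def sparsity_of(matrix):
    """Fraction of entries in the matrix that are exactly zero."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for w in row if w == 0.0)
    return zeros / total


# Example: remove half of the weights of a small 2x3 layer.
W = [[0.9, -0.05, 0.3],
     [-0.02, 0.7, -0.4]]
W_pruned = magnitude_prune(W, 0.5)
print(W_pruned)             # [[0.9, 0.0, 0.0], [0.0, 0.7, -0.4]]
print(sparsity_of(W_pruned))  # 0.5
```

Production pruning pipelines typically remove weights gradually and fine-tune the model afterward to recover accuracy; this sketch omits that step.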

The result is faster execution, broader deployment options, and lower memory use. Stevens describes this as unlocking AI without friction: developers can add generative models to their applications using standard tools such as Python APIs.
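One reason a pruned model can run faster and use less memory on a CPU is that zero weights need not be stored or multiplied. The sketch below is a hypothetical illustration and does not reflect DeepSparse’s internals: it stores a pruned matrix in compressed sparse row (CSR) form and computes a matrix-vector product that touches only the nonzero weights. The names `to_csr`, `csr_matvec`, and `dense_matvec` are made up for this example.

```python
# Hypothetical illustration of sparse storage and compute; not DeepSparse code.

def to_csr(matrix):
    """Convert a dense 2-D list into CSR arrays (values, col_idx, row_ptr),
    keeping only the nonzero entries."""
    values, col_idx, row_ptr = [], [], [0]
    for row in matrix:
        for c, w in enumerate(row):
            if w != 0.0:
                values.append(w)
                col_idx.append(c)
        row_ptr.append(len(values))  # end of this row's nonzeros
    return values, col_idx, row_ptr


def csr_matvec(values, col_idx, row_ptr, x):
    """Multiply a CSR matrix by vector x, skipping all zero weights."""
    y = []
    for r in range(len(row_ptr) - 1):
        acc = 0.0
        for i in range(row_ptr[r], row_ptr[r + 1]):
            acc += values[i] * x[col_idx[i]]
        y.append(acc)
    return y


def dense_matvec(matrix, x):
    """Reference dense product that multiplies every weight, zeros included."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in matrix]


# A pruned 2x3 layer: half of its weights are zero.
W_sparse = [[0.9, 0.0, 0.0],
            [0.0, 0.7, -0.4]]
x = [1.0, 2.0, 3.0]

values, col_idx, row_ptr = to_csr(W_sparse)
y_sparse = csr_matvec(values, col_idx, row_ptr, x)
y_dense = dense_matvec(W_sparse, x)
```

For this 2×3 example, the CSR form keeps 3 of the 6 weights and performs 3 multiplications instead of 6, while producing the same result as the dense product. Real speedups depend on sparsity level, memory layout, and hardware, so a toy example like this only shows the principle.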

Looking Ahead: An Open, Efficient AI Future

For Stevens, the larger mission remains the same: to tear down the barriers around generative AI and spread it to as many organizations as possible. By focusing on software efficiency and building on existing hardware ecosystems, Neural Magic is positioning itself to be a major player in making advanced AI cheaper, more flexible, and more widely available.

Neural Magic’s decision to pursue smarter software rather than join the hardware race points to another path. As companies make AI an integral part of their strategy, many are likely to adopt a software-first approach, one that has become closely associated with sustainable innovation, in the coming years.