Data Mining That Turns Healthcare Data Into Practical Insight

Jump To Key Section

- What Does Data Mining Mean in the Healthcare Context

- Why Healthcare Data is Uniquely Difficult to Mine

- High-Impact Use Cases for Data Mining in Healthcare

- The Foundation: Data Quality, Standardization, and Integration

- Feature Engineering: Translating Clinical Reality into Usable Signals

- Unstructured Data: Mining Clinical Notes Responsibly

- From Patterns to Action: Embedding Insights Into Workflows

- Ethics, Bias, and Safety in Healthcare Mining

- A Note on Kodjin

- How to Get Started with a Practical Mining Roadmap

- The Bottom Line

Are you wondering how data mining in healthcare turns healthcare data into practical insights? Well, the answer is here.

Among the various data mining classifiers, the decision tree was the best classifier with 93.62% accuracy in breast cancer.

Therefore, this article aims to target how data mining can turn healthcare into practical insights, covering the foundation, features, patterns, and actions involved, and more!

Key Takeaways

- What does data mining mean in the healthcare context

- Why healthcare is uniquely difficult to mine

- High-impact use cases for data mining in healthcare

- The foundation: data quality, standardization, and integration

- Feature engineering: translating clinical reality into usable signals

- Unstructured data: mining clinical notes responsibly

- From patterns to action: embedding insights into workflows

- Ethics, bias, and safety in healthcare mining

- A note on Kodjin

- How to get started with a practical mining roadmap

What Does Data Mining Mean in the Healthcare Context

Data mining is a process of discovering patterns, relationships, and predictive signals in large datasets.

In healthcare, it often means identifying factors that are linked to outcomes (readmissions, complications, no-shows), segmenting populations into meaningful cohorts, detecting anomalies (fraud, unusual utilization), and forecasting future events (risk progression, demand).

Data mining can at times include a wide range of techniques:

- Clustering

- Classification

- Regression

- association rule mining

- natural language processing for clinical notes

- time-series analysis

- and anomaly detection.

Why Healthcare Data is Uniquely Difficult to Mine

There is a set of problems that occur while mining healthcare data. These involve :

- Fragmentation: A patient’s journey is often spread across multiple systems and organizations. Even within one hospital, different departments may store information differently.

- Inconsistency: The same concept might be coded in different ways, units may vary, timestamps may be missing, and documentation practices differ by clinician.

- Unstructured data: Many clinically important details live in free-text notes, discharge summaries, and narrative reports.

In healthcare, technical capability is not enough—trust, compliance, and safety are part of the method.

High-Impact Use Cases for Data Mining in Healthcare

In order to have a high impact, cases are analysed in data mining. This involves a fewcommonly used cases such as :

- Cases of risk prediction: Organizations mine historical data to identify patients at higher risk of readmission, deterioration, or complications.

The goal is to prioritize interventions—follow-up calls, medication reconciliation, additional monitoring—before problems escalate.

- Population health management is another major area: Data mining helps to identify the cohorts that are not meeting care targets. (for example, chronic condition control),

- Operational optimization is another overlooked but highly valuable aspect as well.

- For payers and payviders, claims and utilization mining support cost-of-care insights, fraud and abuse detection, and targeted care management.

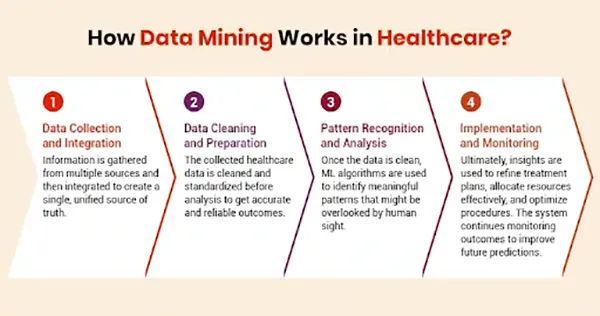

The infographics further entail how data mining functions in health care :

The Foundation: Data Quality, Standardization, and Integration

Healthcare data mining cannot succeed on poor data. The three factors that need to be considered are :

- Data Quality

- Standardization

- Integration

Before any modeling, organizations need to integrate sources, resolve patient identity, and standardize key fields to build consistent structures and terminologies.

Data standardization, therefore, matters because it enables apples-to-apples comparisons.

Note: If lab tests are coded inconsistently or units vary, trends become misleading. If diagnoses are not normalized, cohort definitions break. If medication data is incomplete, safety analyses are distorted.

Integration also includes maintaining provenance: knowing where each data element came from and when it was recorded.

Without provenance, clinicians can’t trust outputs, and analysts can’t troubleshoot inconsistencies, which will make the entire process a lot more difficult.

Feature Engineering: Translating Clinical Reality into Usable Signals

In healthcare, feature engineering often matters as much as the algorithm does. Raw data needs to be transformed into meaningful variablesfor it to be useful:

Like trends in labs rather than single values, medication changes over time, and comorbidity indices, etc.

Clinical context is crucial, as A single lab value might be less informative than its entire trajectory. A medication list, thus, might matter less than a recent change.

This is the reason the best models reflect how clinicians reason—patterns over time, combinations of factors, and changes in status.

Unstructured Data: Mining Clinical Notes Responsibly

A large portion of valuable clinical information sits in narrative text. Symptoms, social context, clinician reasoning, and nuances of severity often appear in notes rather than structured fields.

NLP can extract signals from these documents, such as mentions of fall risk, adherence challenges, or social determinants, to come up with the best results.

But NLP also adds risk. Errors in extraction can create false signals. That’s why text-mining outputs need validation, explainability, and careful monitoring for complete results.

In many cases, NLP works best as an assistive layer—augmenting structured data rather than replacing it.

From Patterns to Action: Embedding Insights Into Workflows

Mining results are useless if they don’t change behavior.

The most successful programs deliver insights where decisions happen:

- care management queues

- quality improvement reviews

- operational huddles

- or clinician-facing tools

Overly complex black-box outputs often fail adoption, even if accuracy looks good in a report, but ultimately they could turn in a failure.

The best design is focused. Instead of flooding teams with alerts, systems should prioritize the most actionable cases and provide clear next steps.

Ethics, Bias, and Safety in Healthcare Mining

Healthcare datasets reflect real-world inequities: access issues, underdiagnosis in certain populations, differences in documentation, and uneven follow-up as well.

Data mining can unintentionally reinforce these patterns if models are trained without careful evaluation, leading to a backlog.

Responsible practice includes validating results across demographics, monitoring for drift over time, and setting clear governance around how outputs are used.

Privacy protections also matter in such cases: minimizing unnecessary data exposure, enforcing least-privilege access, and maintaining audit trails.

In healthcare, the goal is not just “better predictions.” The goal is safer, fairer decisions supported by trustworthy data.

A Note on Kodjin

Kodjin is known for work in healthcare interoperability and FHIR-based solutions, which connect directly to successful data mining programs, making it very significant.

When clinical data is structured consistently and integrated through standards-driven pipelines, analytics and mining become easier to scale and validate.

Strong data modeling and interoperability foundations reduce the amount of manual cleanup and re-mapping, reducing the workload.

And, allowing teams to focus more on discovering reliable patterns and less on fighting data fragmentation.

How to Get Started with a Practical Mining Roadmap

In order to begin with, start with a focused use case that has clear ownership and measurable outcomes—such as reducing readmissions, improving chronic care control, cutting no-shows, or optimizing discharge planning to have a practical mindmap.

Secondly, ensure the data foundation is strong enough: define cohorts, validate data completeness, standardize key fields, and establish governance.

Building simple models first and validating with real stakeholders. Often, a transparent baseline model plus a well-designed workflow can outperform a complex model that nobody trusts.

Lastly, Track results, monitor bias and drift, and iterate based on outcomes.

Over time, expand the program: add more sources, incorporate longitudinal features, explore NLP for notes, and improve intervention pathways.

The Bottom Line

Healthcare is full of patterns that can improve care, but extracting those patterns requires discipline.

Thus, data mining in healthcare works best when it’s grounded in standardized, high-quality data; designed for explainable, actionable outputs; and governed responsibly for safety and fairness.

Ans: Data mining, a subfield of artificial intelligence that makes use of vast amounts of data in order to allow significant information to be extracted through previously unknown patterns, has been progressively applied in healthcare to assist clinical diagnoses and disease predictions

Ans: The key types of data mining are as follows: classification, regression, clustering, association rule mining, anomaly detection, time series analysis, neural networks, decision trees, ensemble methods, and text mining.

Ans: Healthcare analytics empower medical professionals to holistically examine patient data, including medical history, lab results, vital signs, and lifestyle factors to create a health profile.

Ans: Natural Language Processing (NLP) deals with creating computational techniques to process and comprehend natural languages.